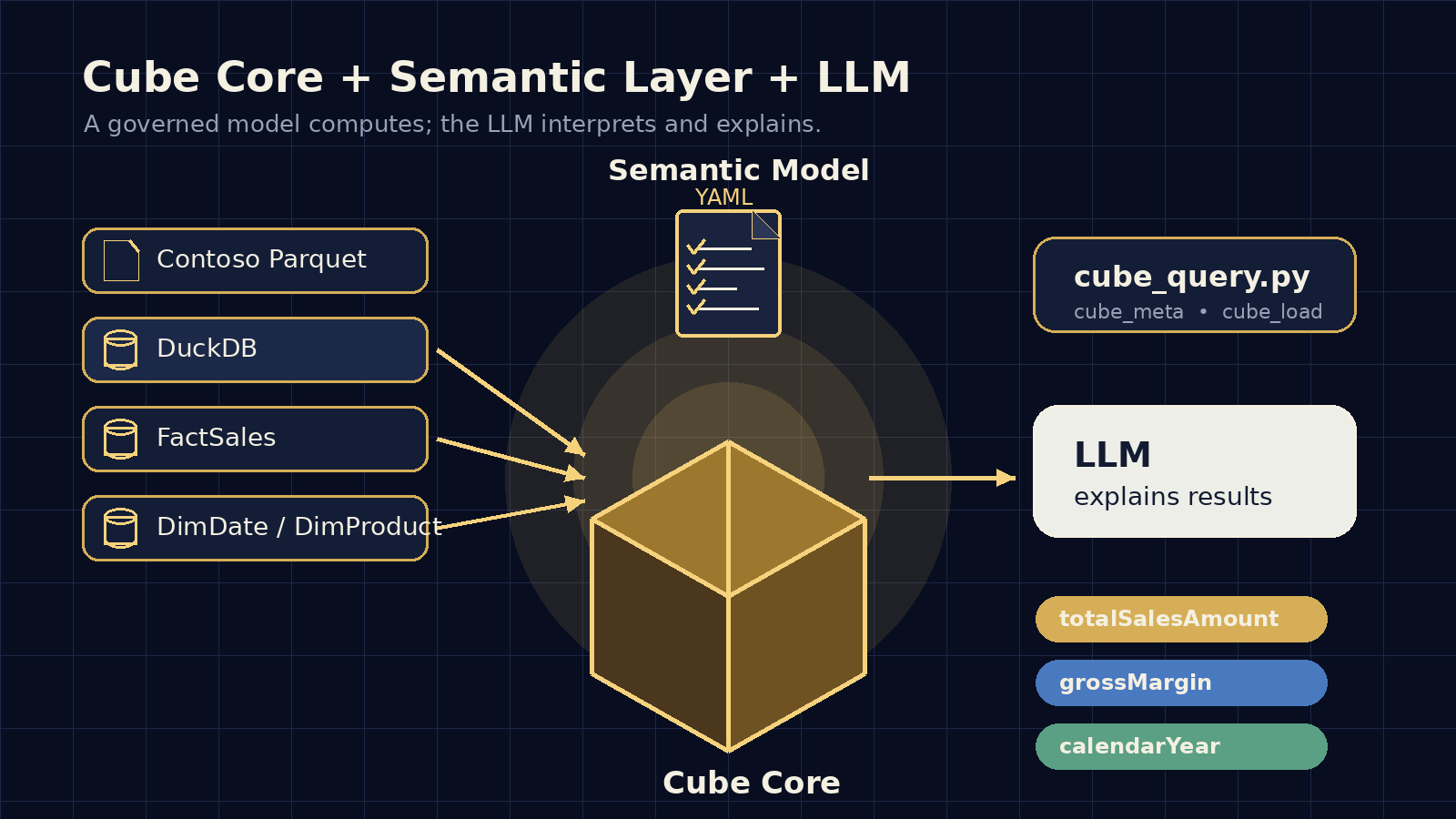

How I Understood Cube Core: Semantic Layer, Semantic Model, and Deterministic LLM Results

A didactic guide I wrote to remember how to build a semantic layer with Cube Core, DuckDB, and Contoso, covering YAML semantics, a Python helper, a notebook implementation, and LLM usage for deterministic analytics.